Golf Analytics Operating System

Golf analytics that reflects how I think.

The publicly available golf analytics tools are either too surface-level for serious weekly analysis or too generic to reflect any individual point of view. I wanted something that thought about golf the way I do.

Existing feeds like DataGolf provide strong statistical baselines — but they don't have my opinion layered on top. They don't know that I weight course fit differently for a major. They don't have my archetype taxonomy. They don't generate my Pick6 line estimates or build lineups using my leverage philosophy.

More practically: I was spending tournament weeks jumping between five different tools with no single surface connecting my player profiles to my predictions, my DFS stack, and my content workflow.

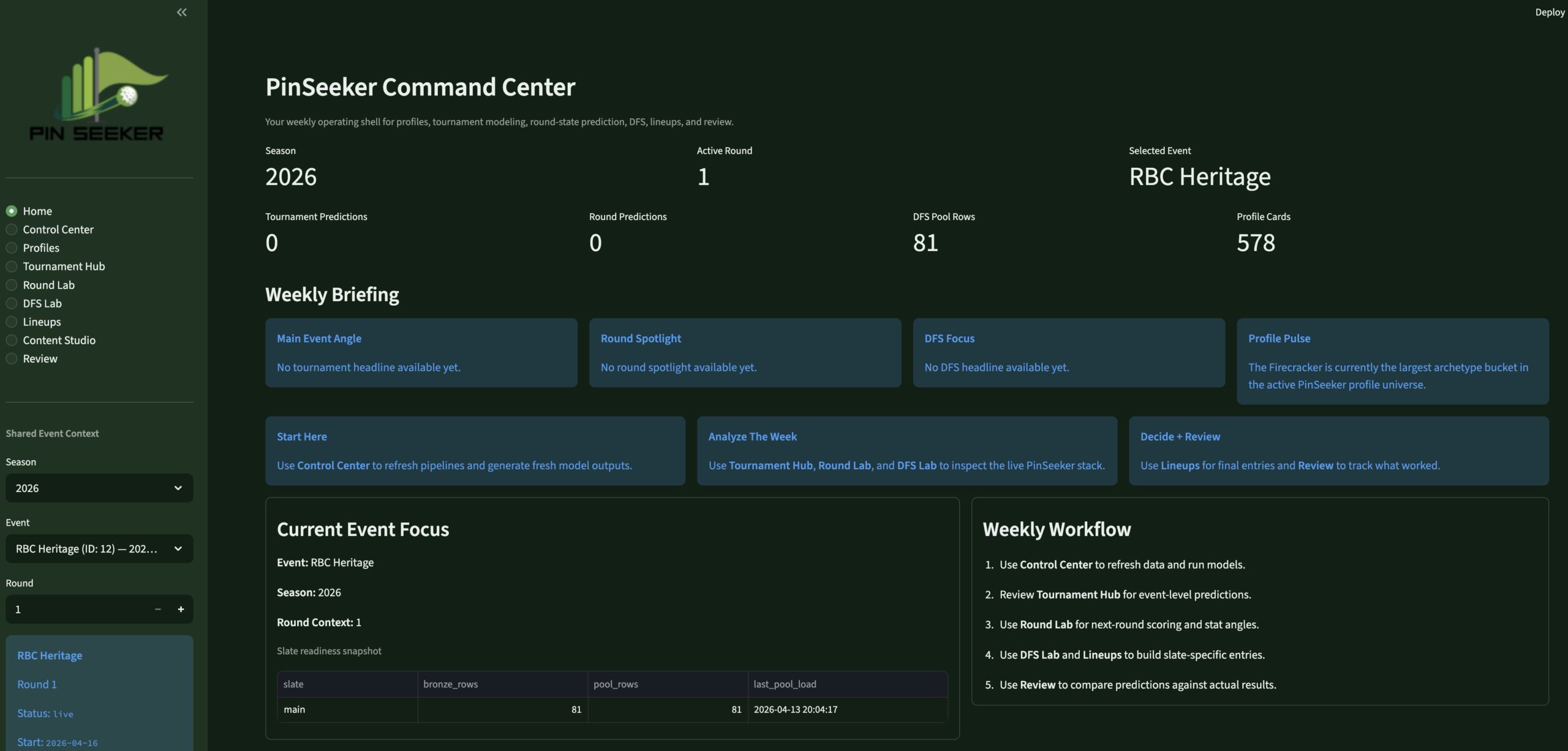

PinSeeker is the system I built so I never have to do that again. One warehouse. One app. My own analytical layer on top of the best available data.

Every layer. One person.

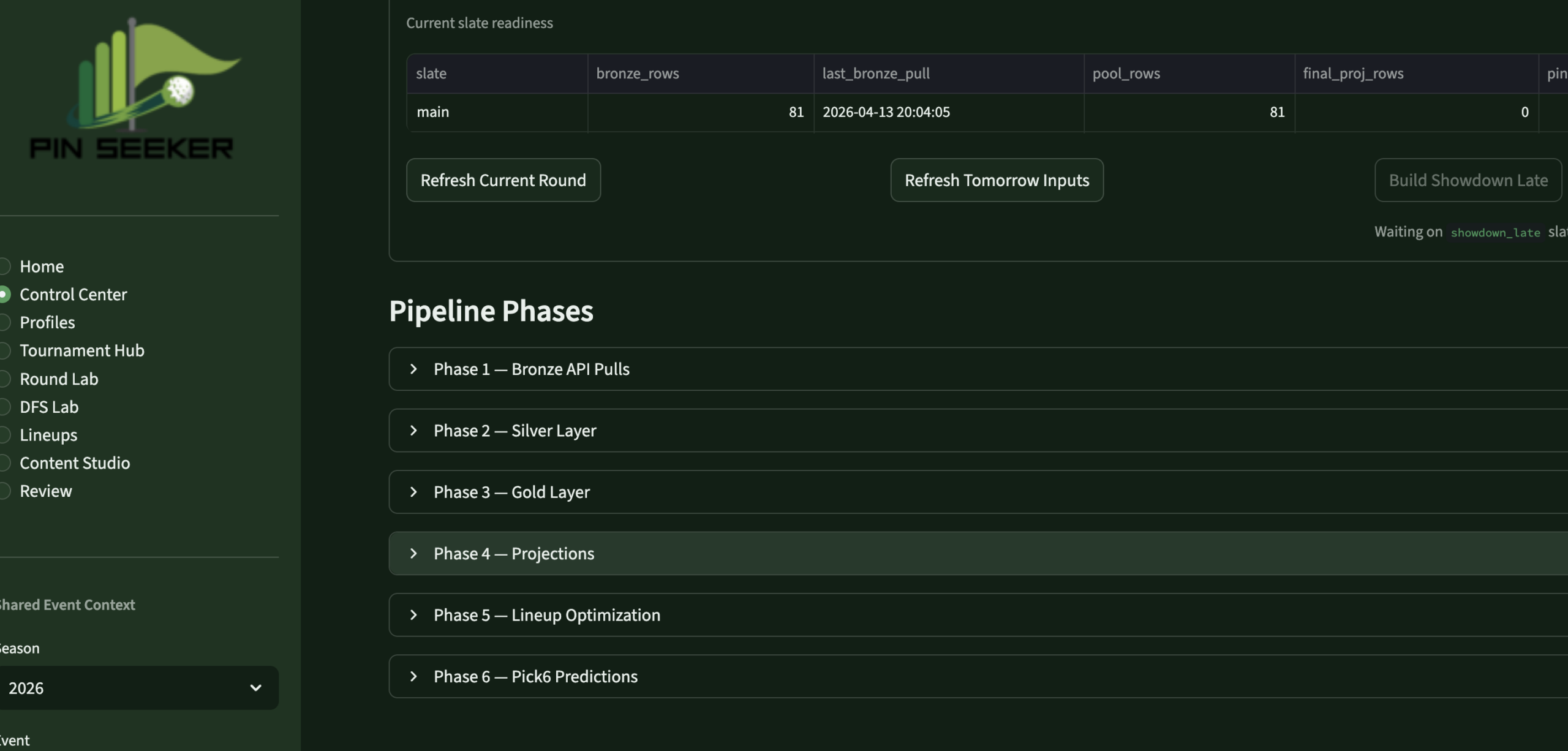

- Designed the full MySQL medallion warehouse — Bronze (raw API), Silver (clean/structured), Gold (analytics-ready), plus ops and models schemas. 14+ active DDL files in the 2026 rebuild.

- Built all Python ETL pipelines from the DataGolf API — automated via Mac cron for live tournament refreshes and pre-tournament pulls

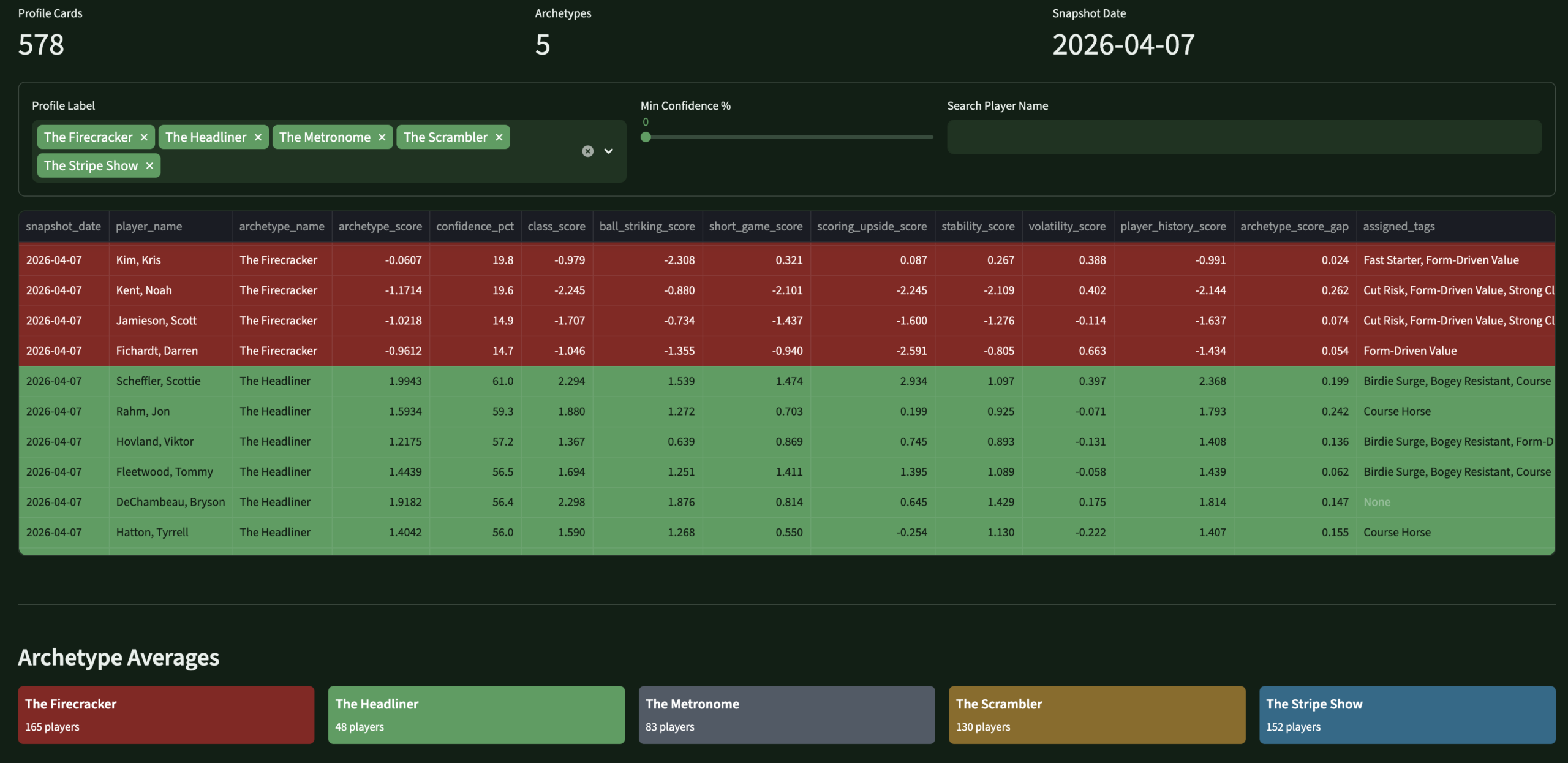

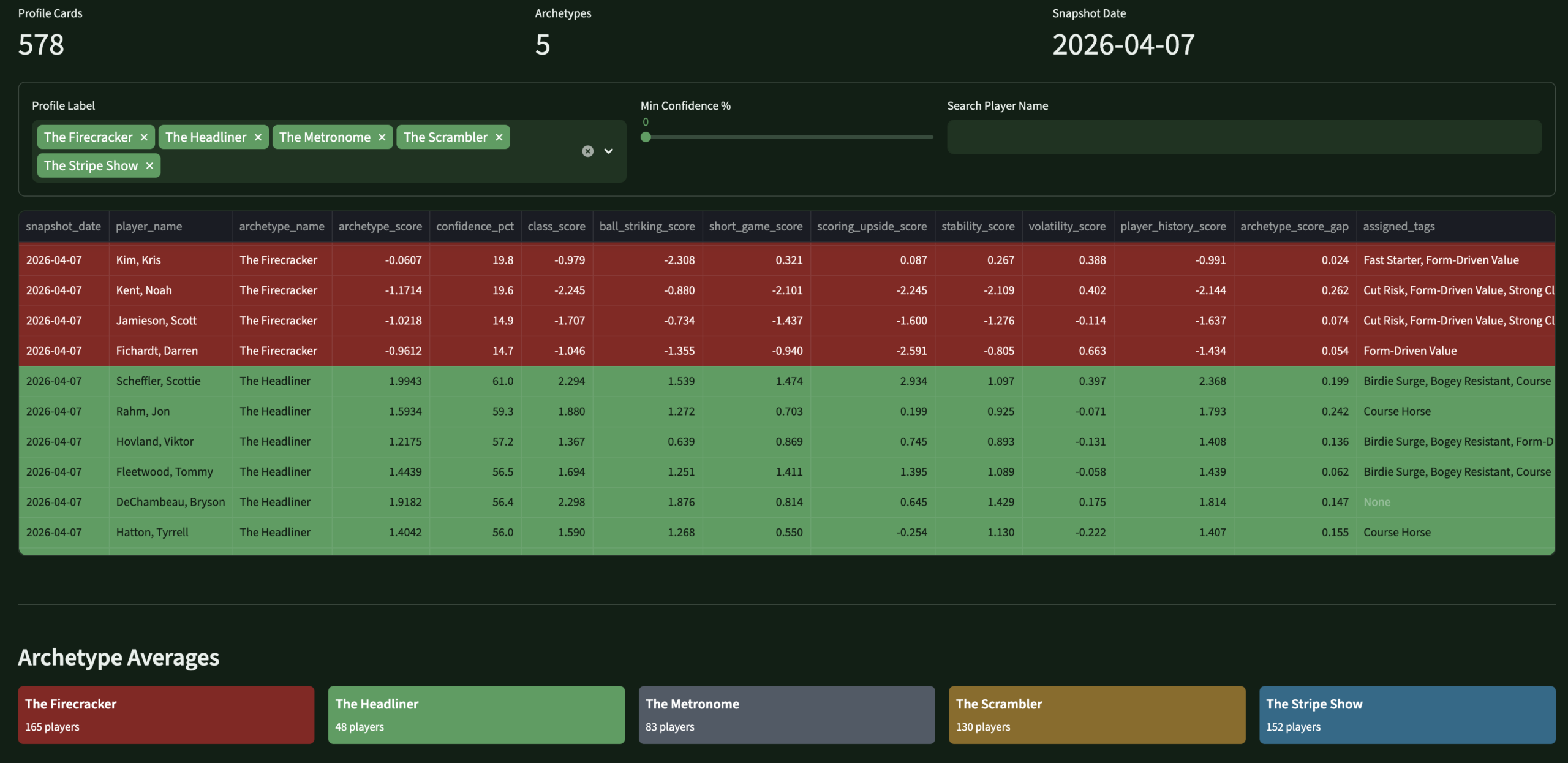

- Created the PinSeeker Player Identity System — 5 branded archetypes, 12 trait tags, 6 analyst flags — versioned, scored, append-only history

- Built the full prediction stack — tournament outcomes (win/top10/cut), round-level scoring, stat predictions (birdies, pars, bogeys, eagles) with PinSeeker rule layers on top of DataGolf baselines

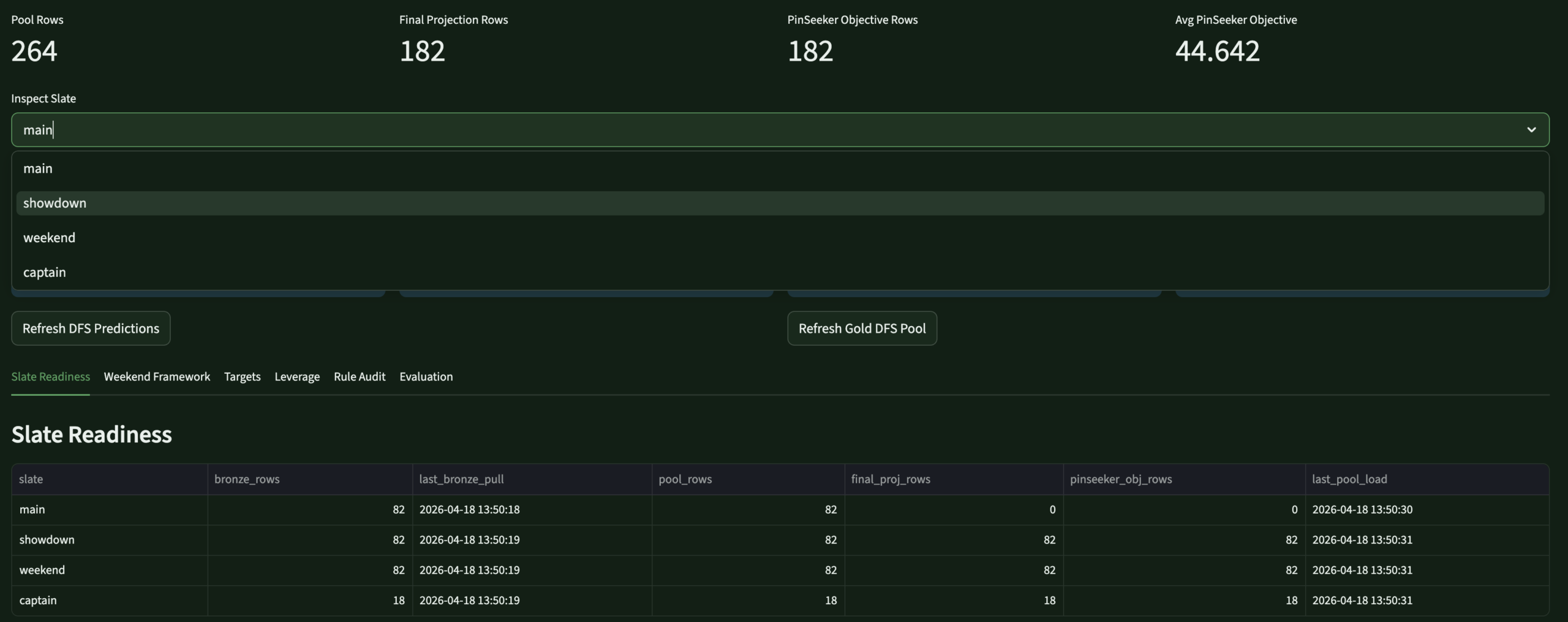

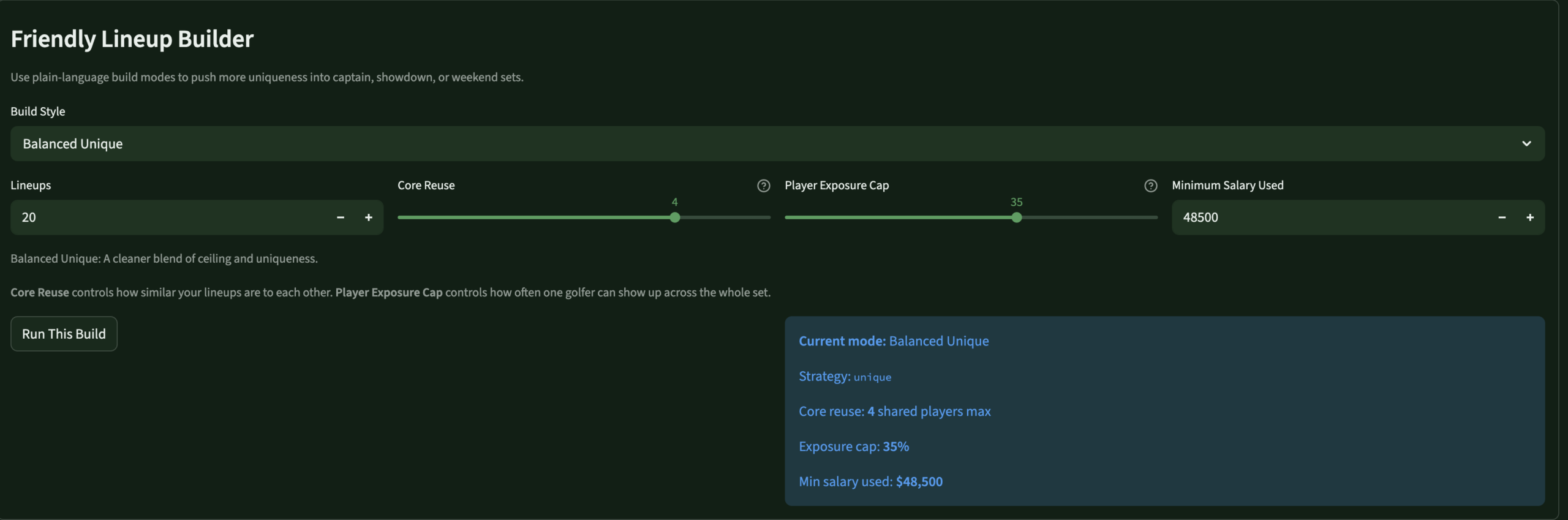

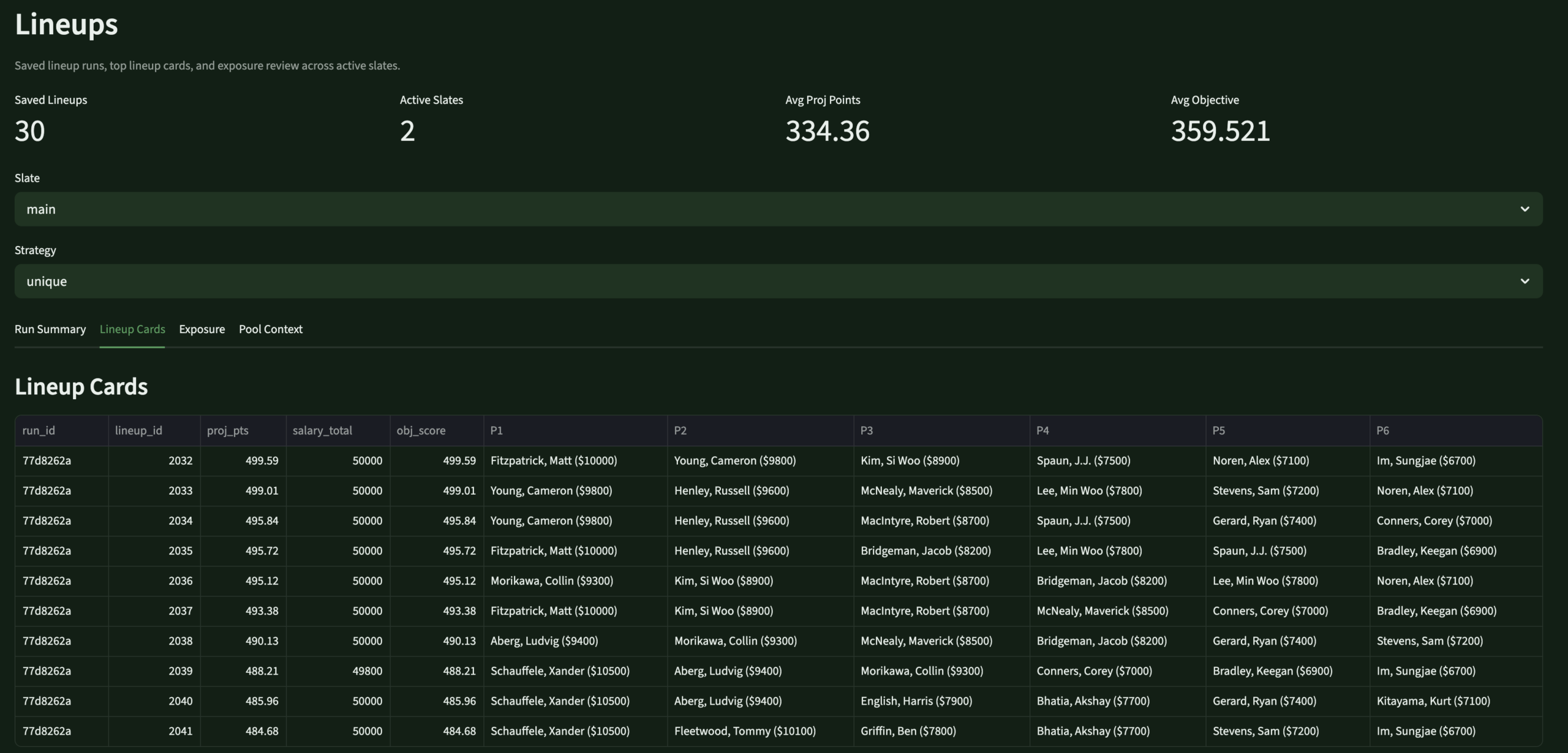

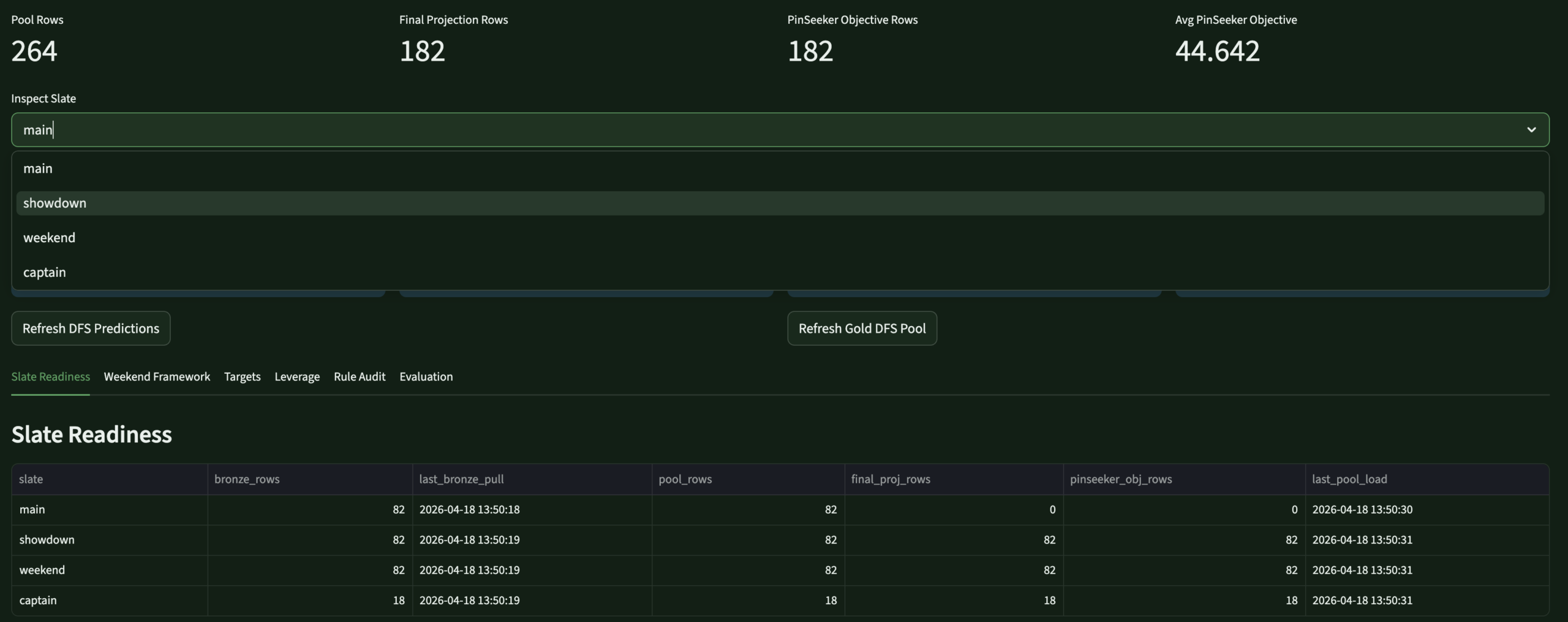

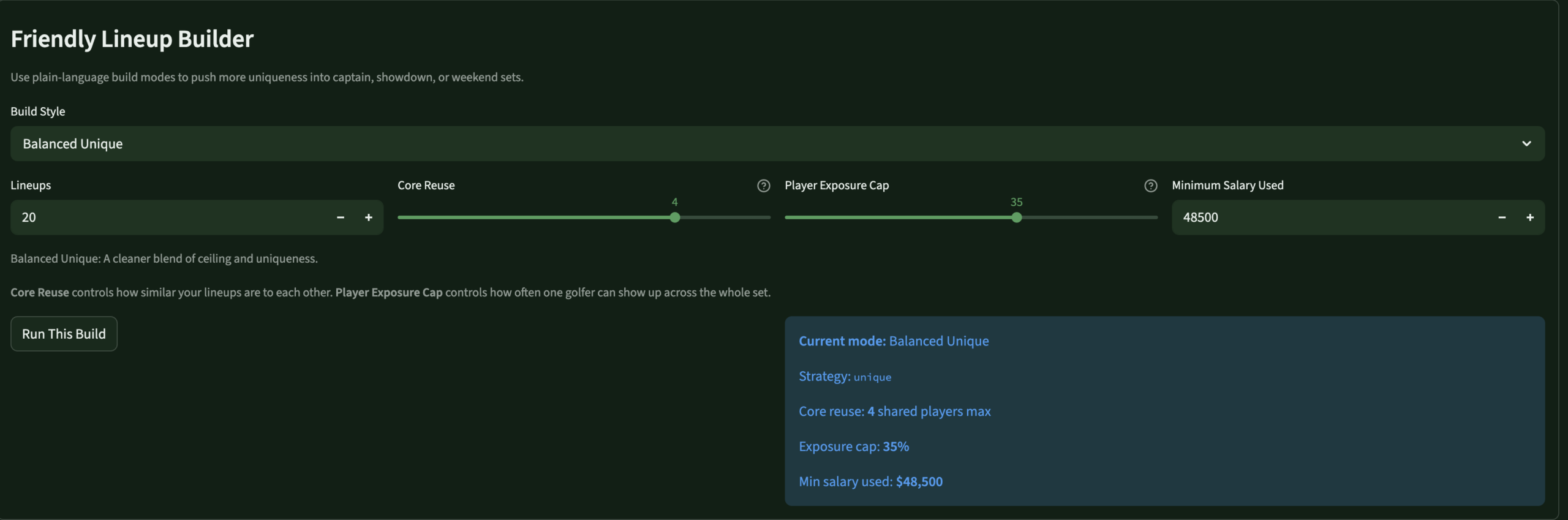

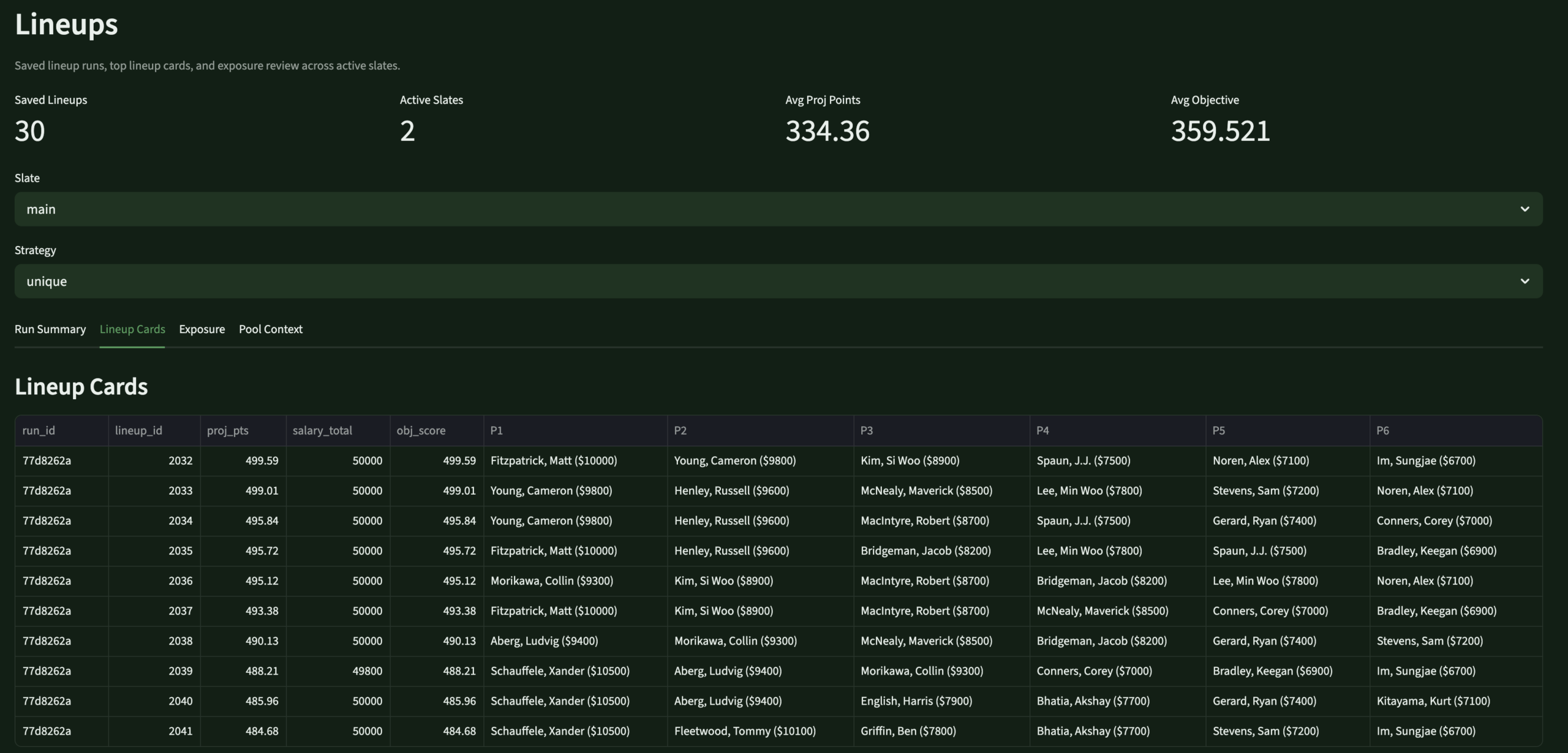

- Designed the DFS intelligence layer — 60/40 source/PinSeeker projection blend, archetype-aware rule engine, lineup optimizers for all DraftKings slates

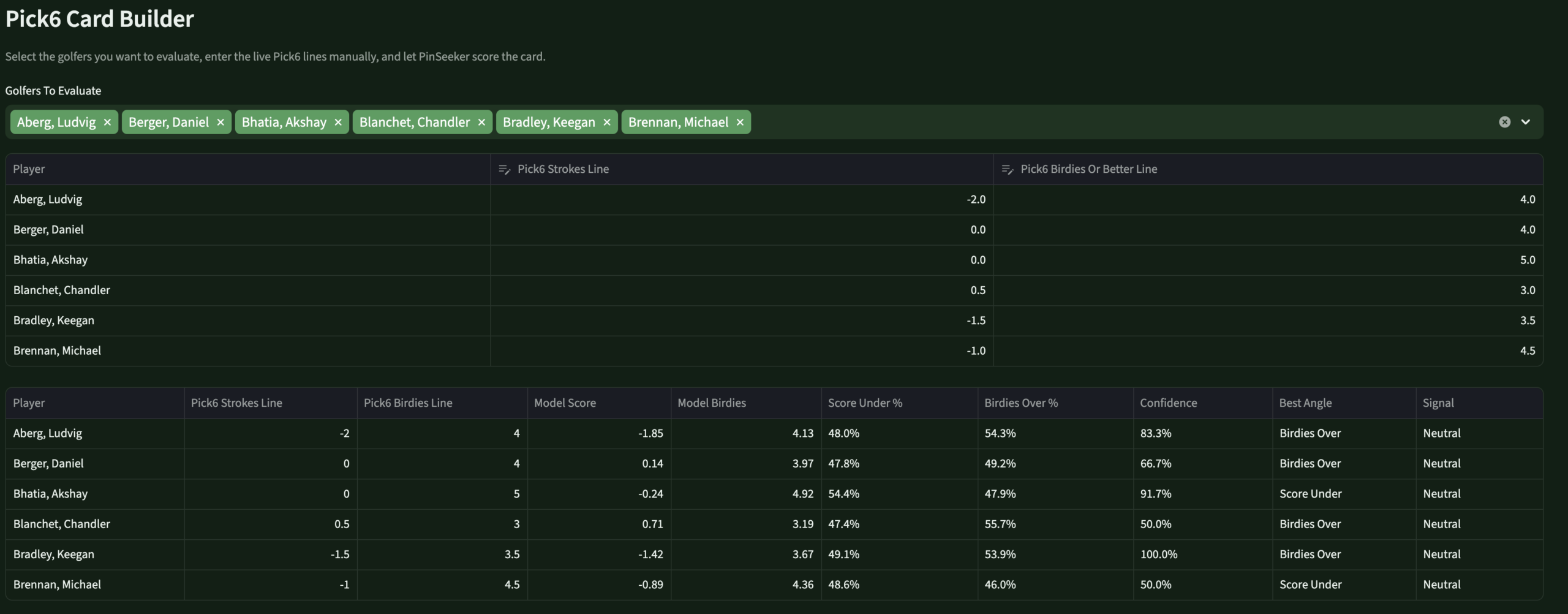

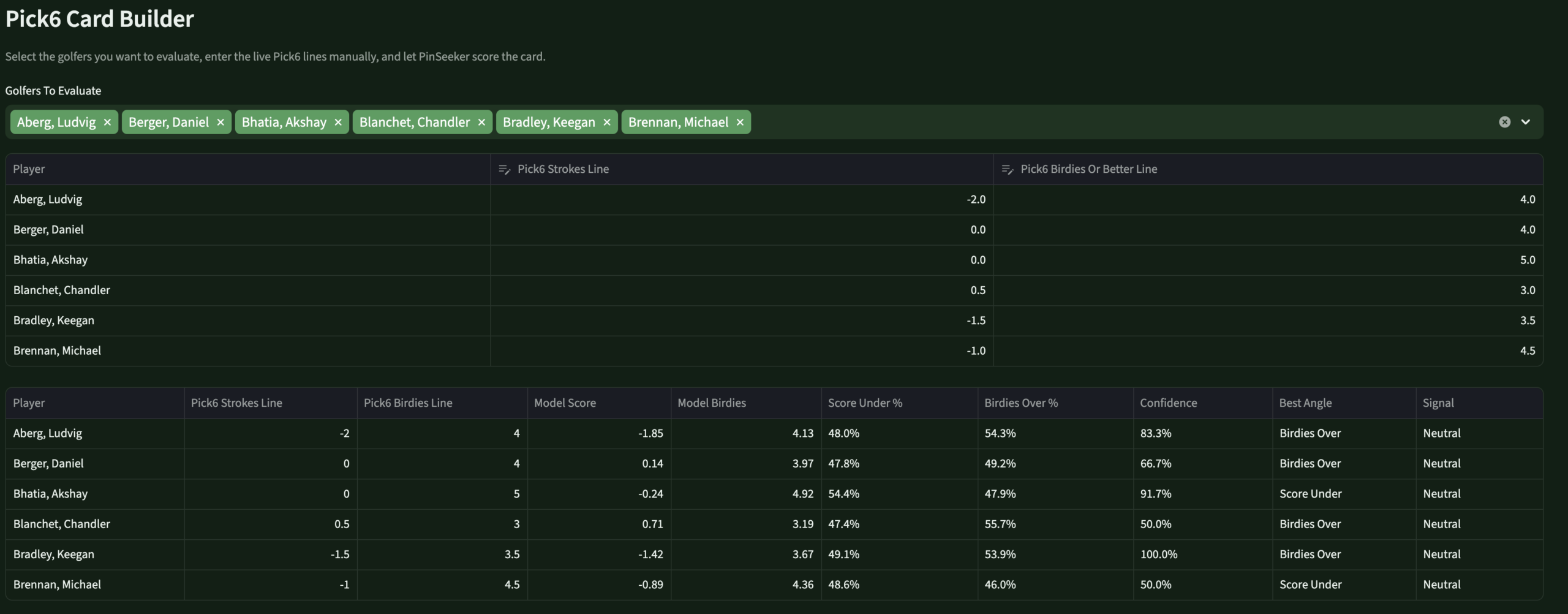

- Built the Pick6 decision engine — PinSeeker-generated lines and over/under probabilities without depending on bookmaker lines

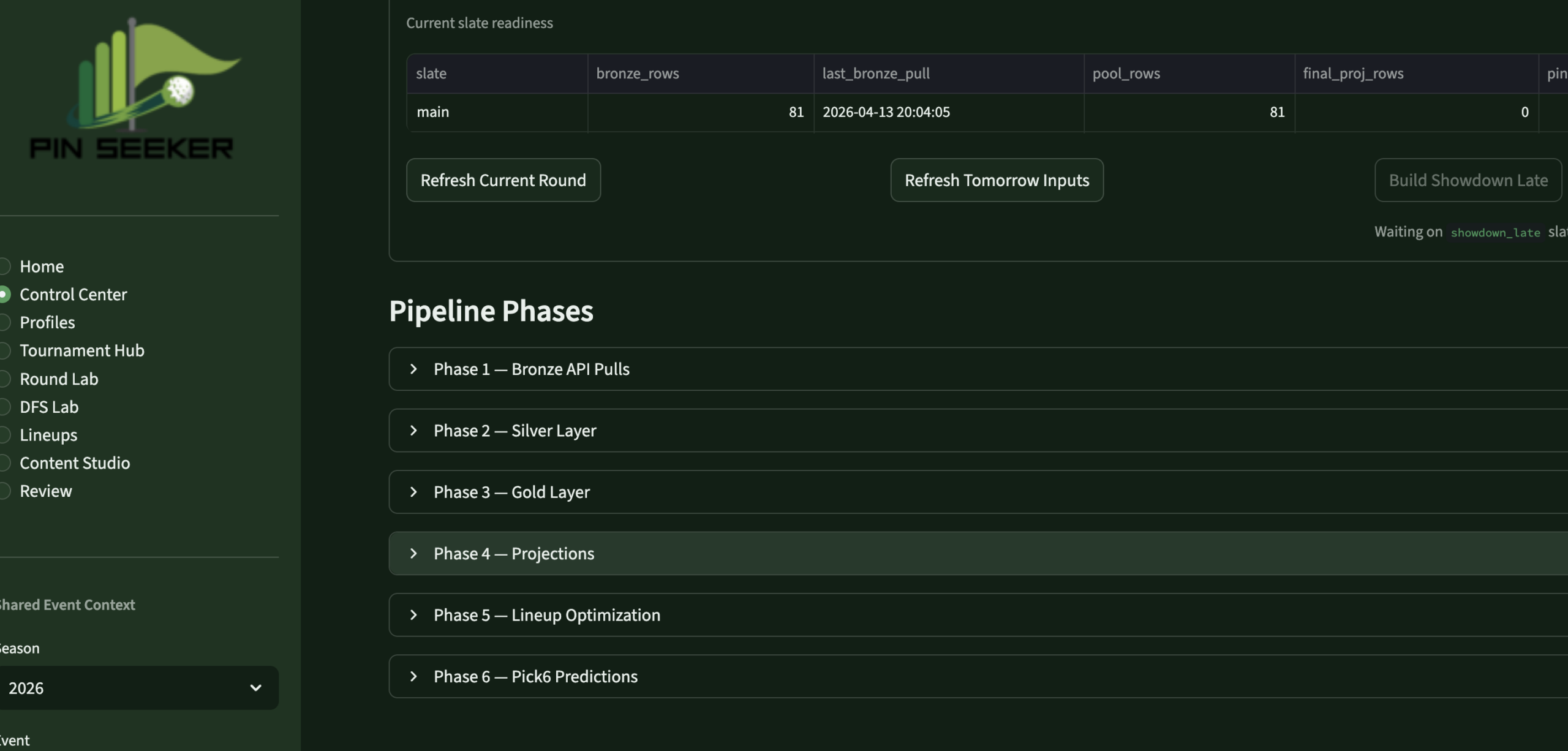

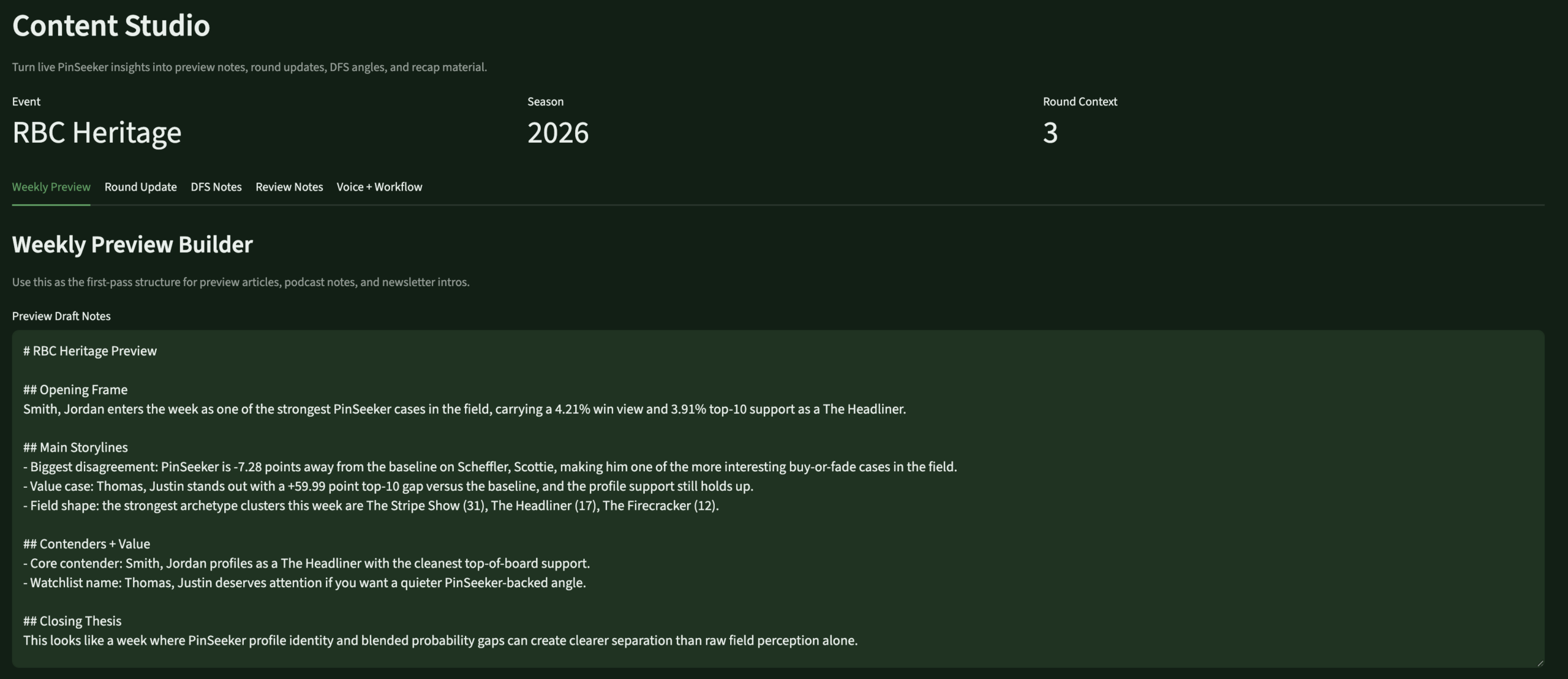

- Built the 8-page Streamlit app — weekly operating system: Home, Control Center, Profiles, Tournament Hub, Round Lab, DFS Lab, Lineups, Review

- Designed the evaluation framework — Brier score, MAE, calibration tracking, model version comparison across all prediction families

- Built for one user — every UX decision, every data model choice, every archetype definition reflects my own analytical framework, not a market of users

- Versioned and auditable throughout — every prediction family ties to a model run ID, every rule adjustment is traceable, every profile snapshot is append-only history

- Backend-first, app second: the warehouse was brought to maturity before the app was redesigned; the app is a surface over the data, not a logic engine

- Iterative by design — new archetype rules, Masters special event modes, and prediction tuning are treated as versioned business logic changes, not ad hoc overrides

- Personally operated weekly — I refresh it, run it, review it, and tune it every tournament week during the PGA Tour season

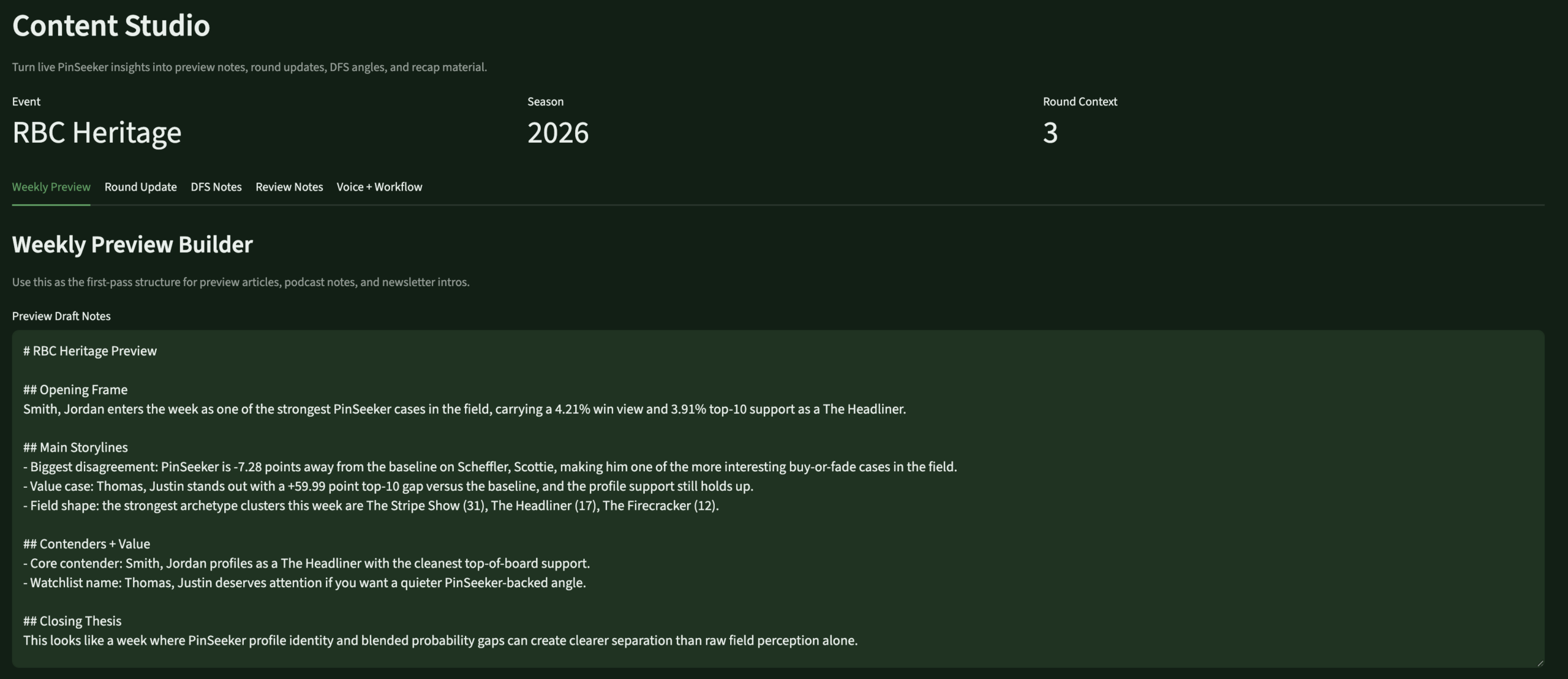

- Content-ready — a dedicated Content Studio page surfaces talking points, blog prep notes, and insight cards directly from the live warehouse
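The evaluation metrics named in the list above (Brier score, MAE, calibration tracking) can be illustrated with a minimal sketch. The function names and sample numbers here are hypothetical and are not taken from PinSeeker's code; the sketch only shows what each metric measures, assuming probabilities in [0, 1] and outcomes coded as 0 or 1.

```python
from statistics import mean


def brier_score(probs, outcomes):
    """Mean squared gap between predicted probabilities and 0/1 outcomes (lower is better)."""
    if not probs or len(probs) != len(outcomes):
        raise ValueError("probs and outcomes must be non-empty and of equal length")
    return mean((p - o) ** 2 for p, o in zip(probs, outcomes))


def mean_absolute_error(predicted, actual):
    """Average absolute miss, e.g. predicted vs. actual birdies in a round."""
    if not predicted or len(predicted) != len(actual):
        raise ValueError("predicted and actual must be non-empty and of equal length")
    return mean(abs(p - a) for p, a in zip(predicted, actual))


def calibration_table(probs, outcomes, n_bins=5):
    """Bucket predictions into equal-width probability bins.

    Returns {bin_index: (mean predicted probability, observed hit rate, count)}.
    A well-calibrated model has the first two values close together in every bin.
    """
    bins = {}
    for p, o in zip(probs, outcomes):
        idx = min(int(p * n_bins), n_bins - 1)  # p == 1.0 falls in the top bin
        bins.setdefault(idx, []).append((p, o))
    return {
        idx: (mean(p for p, _ in rows), mean(o for _, o in rows), len(rows))
        for idx, rows in sorted(bins.items())
    }


if __name__ == "__main__":
    # Made-up top-10 probabilities and results for four players.
    top10_probs = [0.8, 0.3, 0.6, 0.1]
    top10_hits = [1, 0, 0, 0]
    print("Brier:", brier_score(top10_probs, top10_hits))
    print("MAE:", mean_absolute_error([4.2, 3.1], [5, 3]))
    print("Calibration:", calibration_table(top10_probs, top10_hits))
```

Running the same functions per model version and per prediction family is one way a version-to-version comparison could be produced.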

A weekly operating sequence, not a script

Five layers — each cleanly separated so predictions, identity, and decisions all come from the same source of truth.

Tools & system design

Five core technologies and a full system diagram showing how data flows from the API through the warehouse to the app decision surfaces.

Five archetypes. My own taxonomy.

Every active PGA Tour player gets a primary archetype weekly — scored against versioned component formulas, stored as append-only history. These flow into predictions, DFS scoring, content framing, and lineup logic. They're the thing that makes PinSeeker distinctly mine.
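As a rough illustration of "versioned, scored, append-only", the sketch below records one immutable snapshot per player per week and never updates earlier rows. It is an in-memory stand-in for a warehouse table, and every name in it (`ArchetypeSnapshot`, `ArchetypeHistory`, the archetype labels, the version string) is a placeholder chosen for the example, not PinSeeker's actual schema or taxonomy.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class ArchetypeSnapshot:
    """One immutable row: a player's archetype scores for one week under one formula version."""
    player_id: int
    week_start: date
    formula_version: str
    scores: tuple  # ((archetype_name, score), ...) stored as a tuple so the row is immutable
    primary: str


class ArchetypeHistory:
    """Append-only log: rows are added, never edited or deleted."""

    def __init__(self):
        self._rows = []

    def record(self, player_id, week_start, formula_version, scores):
        # The primary archetype is simply the highest-scoring one in this sketch.
        primary = max(scores, key=scores.get)
        snapshot = ArchetypeSnapshot(
            player_id, week_start, formula_version, tuple(sorted(scores.items())), primary
        )
        self._rows.append(snapshot)
        return snapshot

    def history(self, player_id):
        """All snapshots for a player, in the order they were appended."""
        return [r for r in self._rows if r.player_id == player_id]

    def latest(self, player_id):
        """Most recent week; if a week was re-scored, the later append wins."""
        rows = self.history(player_id)
        if not rows:
            return None
        return max(enumerate(rows), key=lambda pair: (pair[1].week_start, pair[0]))[1]


if __name__ == "__main__":
    log = ArchetypeHistory()
    # Placeholder archetype labels and scores.
    log.record(1, date(2026, 1, 5), "v1", {"archetype_a": 0.7, "archetype_b": 0.4})
    log.record(1, date(2026, 1, 12), "v1", {"archetype_a": 0.3, "archetype_b": 0.6})
    print(log.latest(1).primary)
    print(len(log.history(1)))
```

Because old rows are never overwritten, a change in a player's primary archetype shows up as a new snapshot, and the formula version on each row records which scoring rules produced it.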

Eight pages. One weekly flow.

The Streamlit app is the operating surface I actually use every tournament week — not a demo. It runs locally, reads from the live warehouse, and moves me through the full decision sequence in one place.

The stat sheet

Key decisions & tradeoffs

The architectural choices that shaped PinSeeker — and what building for yourself, rather than for a market, changes about each one.

| Decision | Rationale | Tradeoff |
|---|---|---|
| Personal use only | Building for one real user (myself) forced every design decision to be genuinely useful rather than impressive. There's no “will users understand this?” question. If it works for my workflow, it ships. | Maximum relevance: every feature exists because I actually needed it. The evaluation framework exists because I genuinely want to know if I was right, not because it demos well. |
| Backend-first, app second | The warehouse was brought to maturity before the app was redesigned. Full medallion architecture, versioned logic, and auditable predictions were all in place before the Streamlit surface was rebuilt. | Slower to usable UI: months of warehouse work before the app felt right. The right tradeoff, because an app over messy data would have been worse than no app. |
| Versioned rule layers | Every prediction adjustment is versioned, stored, and auditable. I can trace exactly what changed for any prediction in any week. A black-box system is unimprovable. | More engineering overhead: hardcoding would have been faster. But versioned rules are the only way to know if the system is actually getting better. |
| 60/40 DFS blend | Rather than fully trusting external DFS projections or overriding them entirely, the stack blends 60% source with 40% PinSeeker-generated projection. My opinion matters without discarding a strong baseline. | Testable: the evaluation framework compares source-only, PinSeeker-only, and blended MAE. I'll know if the ratio should shift after a full season. |
| Pick6 without book lines | DraftKings Pick6 lines aren't reliably available in advance. PinSeeker generates its own best lines and uses them as the reference for over/under probabilities, which is useful whether or not an actual line ever shows up. | No edge confirmation: I can't say “PinSeeker is 15% above the market” without a market line. But knowing what line PinSeeker would set reveals where my model is extreme, which is the actual decision surface. |
| Masters special mode | The Masters requires fundamentally different prediction logic. Rather than patching the standard weekly process, a dedicated overlay layer sits on top without touching base outputs, preserving everything and adding Augusta-specific signals cleanly. | Reusable for all majors: the event mode architecture (standard / masters_major_mode / pga_major_mode) extends cleanly to every major. Building it right once is cheaper than rebuilding four times a year. |
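The 60/40 blend and its MAE comparison from the table can be sketched in a few lines. This is a hedged illustration only: `blend_projection`, `compare_blends`, and the sample fantasy-point numbers are assumptions made for the example, and the real stack may apply the weights at a different level (per stat, per slate, or after rule adjustments).

```python
def blend_projection(source, pinseeker, source_weight=0.60):
    """Weighted average of an external projection and a PinSeeker projection."""
    if not 0.0 <= source_weight <= 1.0:
        raise ValueError("source_weight must be between 0 and 1")
    return source_weight * source + (1.0 - source_weight) * pinseeker


def mae(predicted, actual):
    """Mean absolute error between two equal-length sequences."""
    if not predicted or len(predicted) != len(actual):
        raise ValueError("inputs must be non-empty and of equal length")
    return sum(abs(p - a) for p, a in zip(predicted, actual)) / len(predicted)


def compare_blends(rows, source_weight=0.60):
    """rows: list of (source_projection, pinseeker_projection, actual_points).

    Returns the MAE of each candidate so the blend ratio can be judged on results.
    """
    actual = [a for _, _, a in rows]
    candidates = {
        "source_only": [s for s, _, _ in rows],
        "pinseeker_only": [p for _, p, _ in rows],
        "blended": [blend_projection(s, p, source_weight) for s, p, _ in rows],
    }
    return {name: mae(preds, actual) for name, preds in candidates.items()}


if __name__ == "__main__":
    # Made-up DraftKings point projections and results for three players.
    sample = [(70.0, 80.0, 76.0), (55.0, 50.0, 52.0), (90.0, 84.0, 85.0)]
    for name, error in compare_blends(sample).items():
        print(f"{name}: {error:.2f}")
```

Sweeping `source_weight` over a season of results and comparing the resulting MAE values is one straightforward way to check whether 60/40 should shift.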

Screenshot gallery

The app in operation: every page from the weekly workflow.